aispm

AI Security Posture Management (AISPM)

The AISPM domain within Kscope KDefend secures your AI assets — models, agents, prompts, training datasets, and AI-powered pipelines. It aggregates findings from AI-specific IAM analysis, static analysis (SAST), and dynamic testing (DAST) through the context graph to detect vulnerabilities unique to AI systems such as prompt injection, model poisoning, and excessive agent permissions.

How It Works

- AI Blueprints ingest metadata from AI services — model registries, agent configurations, prompt templates, training datasets, and AI platform APIs

- Context Graph maps relationships between AI assets, their consumers, data flows, and access patterns

- AISPM Analyzers detect misconfigurations, excessive permissions, and vulnerabilities specific to AI systems

- Insight Feeds surface prioritized findings scored by AI-specific threat models and business impact

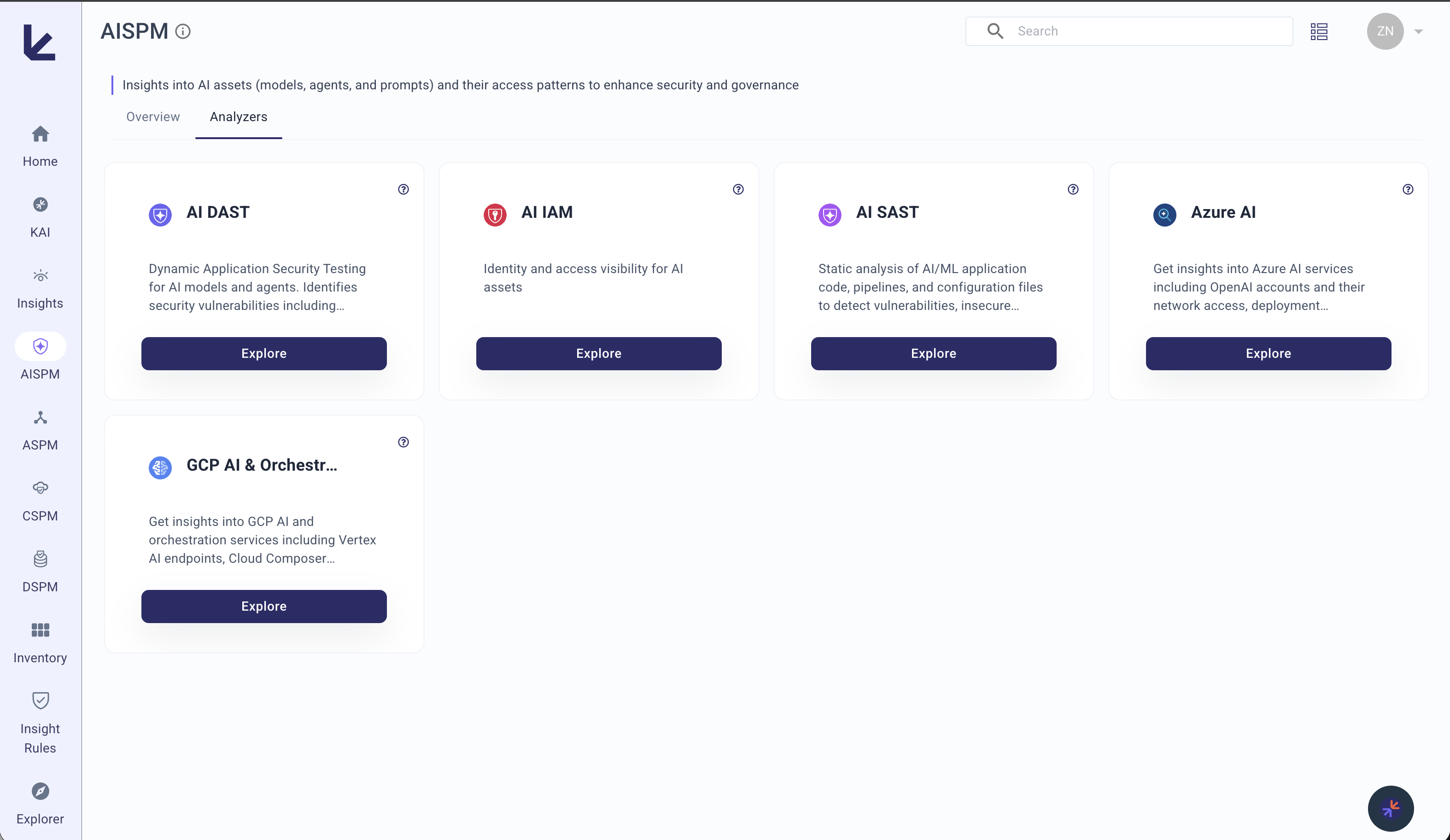

Analyzers

| Analyzer | What it covers | Blueprints |

|---|---|---|

| AI IAM | Overprivileged tokens, long-lived API keys, unauthorized model access, agent permission misuse | GitHub, AI-DAST |

| AI SAST | Insecure AI code patterns, exposed prompts, unsafe eval/code-gen, secrets in AI pipelines | GitHub |

| AI DAST | Prompt injection, jailbreak susceptibility, data exfiltration risks, unsafe outputs, model drift | AI-DAST |

| Azure AI | Azure AI services security — Cognitive Services, OpenAI, ML workspaces, AI agent configurations | Azure |

| GCP AI & Orchestration | GCP Vertex AI, Model Garden, AI Platform security and access controls | GCP |

What It Detects

Agent Security

- AI agents with excessive permissions or insecure configurations

- Overprivileged API tokens and long-lived access keys for AI services

- Unauthorized model access and agent permission misuse

- Unmonitored autonomous agent behavior

Prompt Vulnerabilities

- Prompt templates susceptible to injection attacks

- Jailbreak vectors in deployed AI applications

- System instruction leakage through crafted inputs

- Missing input validation and prompt sanitization

Code & Pipeline Risks

- Hardcoded prompts, secrets, and API keys in AI pipelines

- Unsafe code execution flows in AI applications

- Missing output sanitization and guardrails

- Insecure model serving configurations

Data & Model Integrity

- Training datasets containing sensitive information or poisoned data

- Data exfiltration risks via model responses

- Unmonitored model drift and behavioral anomalies

- Missing data provenance and lineage tracking

Key Metrics

| Metric | Description |

|---|---|

| Vulnerable Agents | AI agents with security weaknesses or misconfigurations |

| Apps Using Vulnerable AI Assets | Applications depending on AI components with known vulnerabilities |

| Vulnerable Training Datasets | Datasets with sensitive data, untrusted sources, or poisoned content |

| Vulnerable Prompts | Prompt templates susceptible to injection or exploitation |

| AISPM Security Risk Score | Composite 0–100 score dynamically weighted across all active AISPM analyzers (AI IAM, AI SAST, AI DAST, Azure AI, GCP AI). Only analyzers with a configured blueprint contribute to the score. |

Related Domains

- ASPM — AI applications are software applications. Code vulnerabilities detected by ASPM may affect AI-specific components, and secrets in code may expose AI service credentials.

- CSPM — Cloud misconfigurations in CSPM affect the infrastructure where AI models are deployed and served. IAM policies governing AI service access are correlated across both domains.